Difference between revisions of "VLC GPU Decoding"

| Line 21: | Line 21: | ||

== Activation == | == Activation == | ||

| + | To activate such a functionnality, use the VLC preferences. | ||

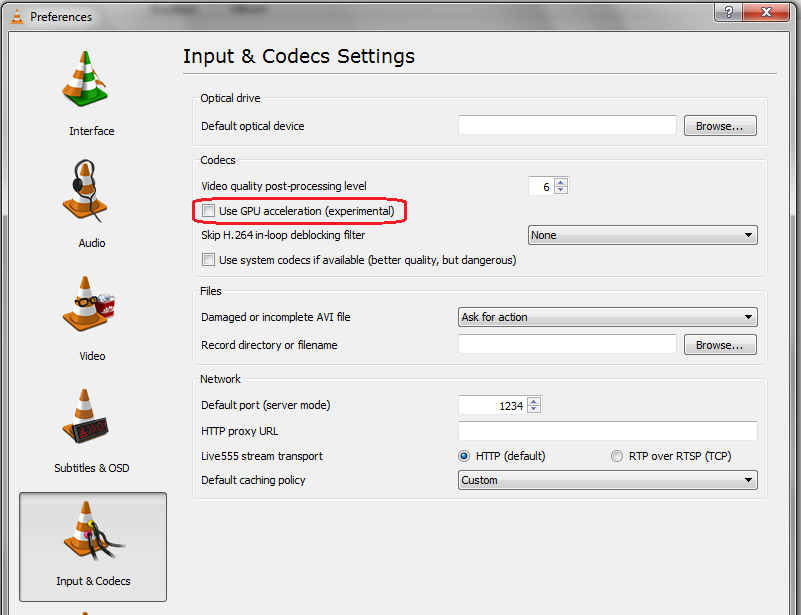

[[File:VLC_GPU.png]] | [[File:VLC_GPU.png]] | ||

Revision as of 19:32, 24 January 2011


Introduction to GPU decoding in VLC

The VLC media player framework can use your graphics card (GPU) to decode H.264 streams (often imprecisely called "HD videos") under certain circumstances.

Because of its modular design and its transcoding and streaming capabilities, VLC uses the GPU at the decoding stage only. It then copies the decoded data back from the GPU so that it can go on to the other stages (streaming, filtering, or any video output plugged in after that).

This means that, compared to some other implementations, GPU decoding in VLC can be slower because the data has to be fetched back from the GPU. In exchange, you can plug ANY video output (sink) into it and use all the VLC video filters.
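The trade-off described above can be pictured with a toy model. This is a conceptual sketch only, not VLC code: the names `Frame`, `gpu_decode`, `copy_back`, `deinterlace` and `run_pipeline` are hypothetical, and plain Python objects stand in for video surfaces. It shows that once a decoded frame has been copied back to system memory, any filter or output can consume it, at the price of one extra copy per frame.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple


@dataclass
class Frame:
    """Hypothetical stand-in for a decoded picture."""
    index: int
    location: str  # "gpu" or "system"
    history: Tuple[str, ...] = ()


def gpu_decode(index: int) -> Frame:
    # Decoding happens on the GPU, so the result starts in GPU memory.
    return Frame(index, "gpu", ("decode",))


def copy_back(frame: Frame) -> Frame:
    # The extra step: bring the decoded frame back to system memory.
    return Frame(frame.index, "system", frame.history + ("copy_back",))


def deinterlace(frame: Frame) -> Frame:
    # Placeholder filter: records that it ran and changes nothing else.
    return Frame(frame.index, frame.location, frame.history + ("deinterlace",))


def run_pipeline(n_frames: int,
                 filters: List[Callable[[Frame], Frame]]) -> List[Frame]:
    output = []
    for i in range(n_frames):
        frame = copy_back(gpu_decode(i))
        for apply_filter in filters:
            # Filters only ever see frames that live in system memory.
            assert frame.location == "system"
            frame = apply_filter(frame)
        output.append(frame)
    return output


if __name__ == "__main__":
    for frame in run_pipeline(2, [deinterlace]):
        print(frame.index, frame.location, frame.history)
```

In this model the `copy_back` call is the cost, and the freedom to pass any list of filters is the benefit.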

Operating System support

Windows

VLC 1.1 supports DxVA version 2.0 (DxVA2), which requires Windows Vista, Windows Server 2008 or Windows 7. If you are using Windows XP, GPU decoding in VLC is not available to you yet.
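As a rough illustration of the operating system requirement, the sketch below checks whether the running system is Windows Vista or newer. It relies on the fact that Windows XP reports NT version 5.1, Vista and Server 2008 report 6.0, and Windows 7 reports 6.1. The function names are hypothetical, and the check covers the OS version only; it says nothing about the driver or the GPU.

```python
import platform
import sys


def dxva2_capable(system: str, major: int, minor: int = 0) -> bool:
    """Return True if the OS is Windows Vista (NT 6.0) or newer."""
    return system == "Windows" and (major, minor) >= (6, 0)


def current_os_is_dxva2_capable() -> bool:
    if platform.system() != "Windows":
        return False
    version = sys.getwindowsversion()  # only exists on Windows
    return dxva2_capable("Windows", version.major, version.minor)


if __name__ == "__main__":
    print("DxVA2-capable OS:", current_os_is_dxva2_capable())
```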

Linux

On Linux, there is code for VDPAU and VAAPI. There is also some code for a VAAPI video output, which is not yet merged into the current Git tree.

Read VLC_VAAPI and thresh's blog (http://strangestone.livejournal.com/107092.html) for more details.

Mac OS X

Mac OS X (10.6.3) provides a new API for GPU decoding. It does not work with VLC yet. Help is welcome.

Activation

To activate this functionality, use the VLC preferences.
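For scripted use, the setting can also be passed on the command line. The sketch below only builds the argument list and does not start VLC. It assumes that the VLC 1.1 series exposes the preference as the `--ffmpeg-hw` switch and that the `vlc` executable is on your PATH; option names change between releases, so verify against the output of `vlc --longhelp` on your build. The helper name `build_vlc_command` and the file name `movie.mkv` are hypothetical.

```python
from typing import List


def build_vlc_command(media: str, gpu_decoding: bool = True,
                      executable: str = "vlc") -> List[str]:
    """Build a VLC argument list, optionally requesting GPU decoding."""
    command = [executable]
    if gpu_decoding:
        # Assumed option name for VLC 1.1; check your version's help output.
        command.append("--ffmpeg-hw")
    command.append(media)
    return command


if __name__ == "__main__":
    command = build_vlc_command("movie.mkv")
    print(" ".join(command))
    # To actually launch VLC:
    #   import subprocess
    #   subprocess.call(command)
```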

Requirements for Windows DxVA2 in VLC

To check your DxVA compatibility, please download DxVA Checker.

Graphics card compatibility on Windows

nVidia

For nVidia GPUs, you need a GPU supporting second-generation PureVideo (VP2 or newer), which means a GeForce 8, GeForce 9 (advised), GeForce 200 or newer.

We strongly recommend a VP3 or VP4 GPU.

To be sure, check your GPU against this table on Wikipedia and confirm that it is VP2 or newer.
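Once you have looked up the PureVideo generation of your card, the rule above reduces to a small classification. The sketch below encodes only what this page states: VP2 or newer is required, and VP3 or VP4 is strongly recommended. The function name `purevideo_status` and the three status strings are hypothetical, and the mapping from card model to generation is deliberately left to the Wikipedia table.

```python
def purevideo_status(generation: str) -> str:
    """Classify an nVidia PureVideo generation label such as "VP2"."""
    label = generation.strip().upper()
    if not (label.startswith("VP") and label[2:].isdigit()):
        raise ValueError("expected a label like 'VP2', got %r" % generation)
    number = int(label[2:])
    if number in (3, 4):
        return "recommended"  # VP3 or VP4: strongly recommended
    if number >= 2:
        return "supported"    # VP2 or newer: meets the requirement
    return "unsupported"      # older than VP2


if __name__ == "__main__":
    for label in ("VP1", "VP2", "VP3", "VP4"):
        print(label, purevideo_status(label))
```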

ATI

For ATI GPUs, you NEED Catalyst 10.7, which has just been released.

You also need a GPU supporting the Unified Video Decoder (UVD).

We believe you need a GPU supporting UVD2, such as the HD 4xxx, HD 5xxx or HD 3200. You might have success with a UVD+ GPU, such as some HD 3xxx cards, but this has not been tested.

Intel

We haven't tested any Intel implementation so far.