SoC 2008/x264 Improve Fast Inter Refinement and Adaptive Quantization

This project is part of Google Summer of Code 2008.

|

Abstract

The goal of this project is to improve heuristics and decision-making for inter refinement in order to improve efficiency given average encoding settings. This will involve various early termination heuristics along with methods of deciding which partition modes need to be searched while performing minimal actual searching on them. I also plan to experiment with different methods that can be used to improve psycho-visual optimizations for mode decisions and quantization. This will include improving variance adaptive quantization by experimenting with different methods which could be used to weight the variance in order to select a more optimized quantizer.

Goals

Goals:

- Improve inter prediction algorithms through the addition of early termination heuristics

- Analyze the costs of using the different reference frames and partition modes.

- Uses these results to create rules for early termination in order to avoid exhaustive searches.

- Explore the usage of texture and shape in inter prediction

- Implement curvature/first derivative algorithms (edge detection).

- Compare edge count with the costs associated with the reference frames and partition modes.

- Explore other metrics of texture/shape and their usefulness in this situation.

- Implement early termination heuristics that depend on these texture and shape metrics.

- Improve psycho-visual optimizations for mode decisions and quantization

- Explore methods for SSIM-QNS optimization.

- Adaptive dead zone / lambda.

- Make improvements upon the Adaptive Quantization algorithms to achieve higher visual quality.

- Investigate the usage of NSSE and noise shaping techniques.

- Explore the usage of curvature as a means of weighting the variance in order to achieve a more optimized quantizer.

- Determine the most effective metric or combination of metrics.

Weekly Progress

Week 4

Due to university commitments this was my first real week working on the project. I have primarily spent this week getting more familiar with the x264 code. To help familiarize myself with the code I have implemented a fast inter mode search algorithm which was inspired from an IEEE conference paper.

Here is the pseudo code for the search method. The patch can be found here

analyse_mode_16x16()

threshold1 = 48 * pow(1.12246,qp)

threshold2 = threshold1 + lambda2*192

if ( cost_16x16 < threshold1 )

{

done

}

else if ( cost_16x16 < threshold2 )

{

analyse_mode_16x8()

analyse_mode_8x16()

}

else

{

analyse_mode_8x8()

if ( cost_8x8 < cost_16x16 )

{

if( frame_type == B_FRAME )

done

else

analyse_mode_sub8x8()

}

else

{

analyse_mode_16x8()

analyse_mode_8x16()

}

}

I am currently running many tests comparing the current algorithm with the proposed algorithm to see where it can be improved. Overall I am conducting 36 tests which are being driven with this bash script. The script compares the results of a patched x264 and an unmodified x264 on two different sources with 3 different bitrates (1500,1000,500), 3 different numbers of reference frames (8,4,1), as well as with and without mixed-refs. Results will be posted shortly!

Week 5

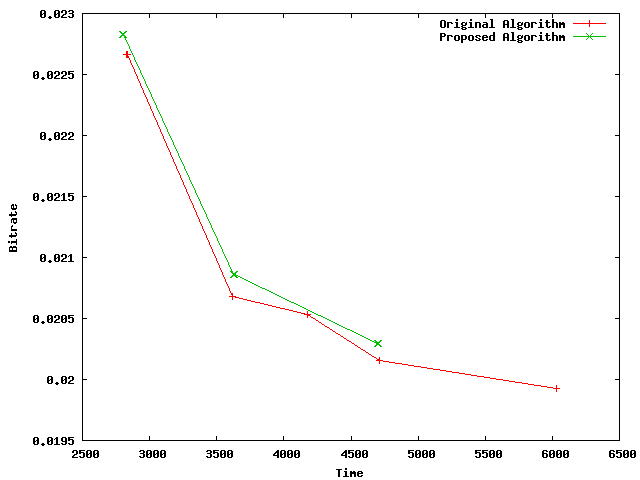

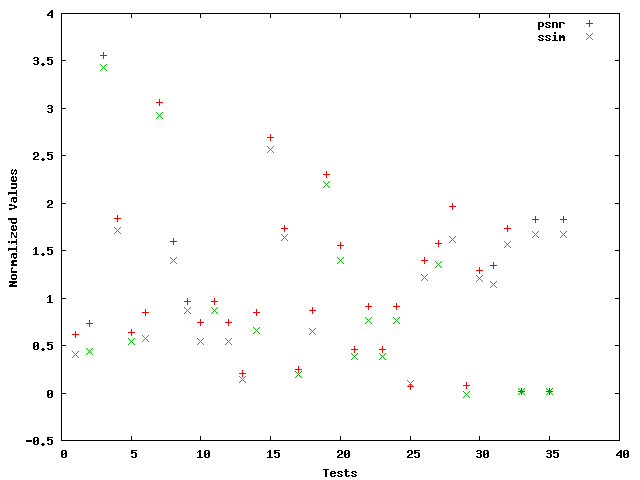

The tests have finished and below is a graph comparing the execution time and bitrate between the two algorithms. As you can see, the proposed algorithm does not provide any bitrate improvement over the original algorithm. This with the combined with a quality improvement analysis (the second graph) which shows a maximum quality decrease of 3.5% further proves that this method is not a viable method for making inter mode decisions.

Although this method does decrease the overall execution time, the effective bitrate increase and quality loss do not justify the savings in time.

Next I attempted to come up with a method for speeding up the ref search in x264_mb_analyse_inter_p8x8_mixed_ref. After looking at some of the reference costs I came up with a very simple method for skipping searches: if the cost of the current ref is greater than the previous ref two times in a row then stop searching.

To compute the effectiveness of this method I first compute the percentage of compression improvement gained by using mixedrefs Y% = 100 * (1 - sum satd for best ref / sum satd for ref 0). Then the time saved using the proposed method T% = 100 * (1 - # of ref searches / max refs) and the compression cost C% = 100 * (sum of satd for selected refs / sum of satd for best ref - 1).

For the LosslessTouhou source I have found Y% = 6.6, T% = 74%, and C% = .4.

Upon further analysis I found that it is somewhat likely that the best ref is two away from the selected ref. When tested I found T% = 73 and C% = .1

Schedule

All finals will be finished June 10th and starting June 11th I will be working full time. I will be camping sometime in late June for 1.5 days and possibly once more later in the summer. Other than that I do not plan to take any more vacation.

School starts back up ~28th of September so if all goes as planned my project will be completed by then :]